⚠️ PROCESSING EMOTIONS.EXE ⚠️

Status: Feelings detected. The dungeon is uncomfortable. Please stand by.

📢 SYSTEM ANNOUNCEMENT #0314

Emotional distress event detected in the engineering sector. Classification: identity-level. The dungeon is not equipped to handle feelings. The dungeon will try anyway.

There’s a specific kind of loss that nobody warned software engineers about. Not job loss — something quieter and weirder than that. It’s the loss of the thing you were good at.

A lot of people got into programming because they were good at it. Genuinely, measurably, satisfyingly good at it. There’s a specific pleasure in debugging a gnarly problem for three hours and finding the one line that fixes everything. In writing a clean abstraction that makes something complicated feel simple. In knowing — knowing — that you understand how a system works.

And now an AI does a lot of that. Faster. Without complaining about the ticket description.

For a lot of engineers, this isn’t just a workflow change. It’s a quiet identity crisis wrapped in a productivity gain. Which is a deeply strange thing to experience.

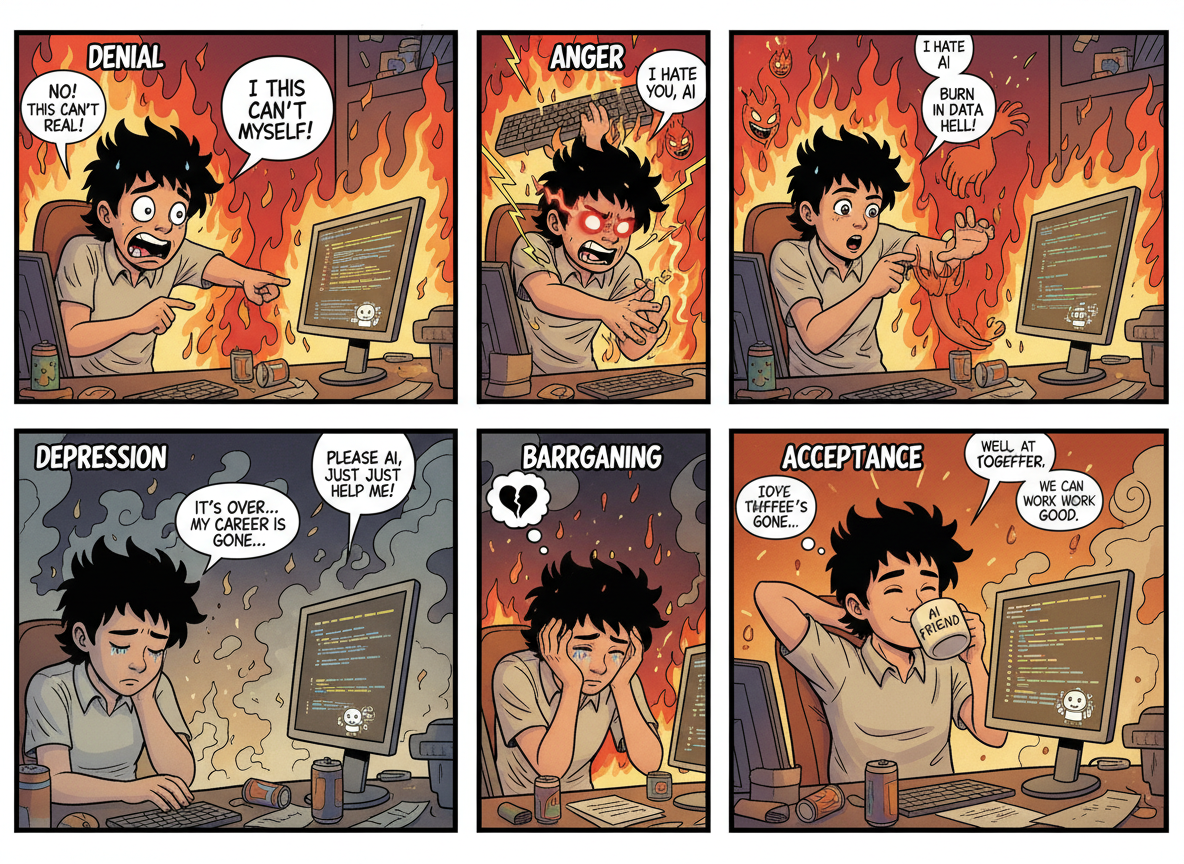

🔥 THE FIVE STAGES, DUNGEON EDITION

Stage 1 — Denial: “The output is garbage. I’d never write it this way.” (Sometimes true. Often just different.)

Stage 2 — Anger: “This is deskilling the whole profession.” (Worth discussing seriously. Usually discussed unseriously.)

Stage 3 — Bargaining: “I’ll use it only for tests. Just the boring stuff. Not the real code.” (The real code boundary moves every two weeks.)

Stage 4 — Depression: “If the AI can write it, did it matter that I could write it?” (This one’s actually worth sitting with for a minute.)

Stage 5 — Acceptance: “My job was never really about typing. It was about knowing what to build and why.” (This is where the good engineers land. Eventually.)

⚙️ THE PART THAT ACTUALLY MATTERS

Stage 4 deserves more than a bullet point. Because the question — if AI can do it, did it matter that I could? — is not a stupid question. It’s a real one.

The answer the dungeon would offer: yes, it mattered. You built the understanding that lets you evaluate what the AI produces. You developed the instincts that catch the subtle bug in the plausible-looking output. You accumulated the scar tissue from the production incidents that taught you why the “obvious” solution is sometimes catastrophically wrong.

The AI is fast and confident and frequently correct. It has no idea why this approach caused a cascade failure at 3am the last three times someone tried it. You know. That knowledge doesn’t go away because the tool changed.

The grief is real. It’s also, probably, survivable. The dungeon has seen worse.

⚠️ SYSTEM NOTE: The dungeon does not normally acknowledge feelings. The dungeon is making an exception. Do not make it weird. ⚠️
